Multi-node GPU Cluster

Multiple GPUs for Large-Scale, High-Performance AI Computing

Overview

Easy-to-use GPU Architecture

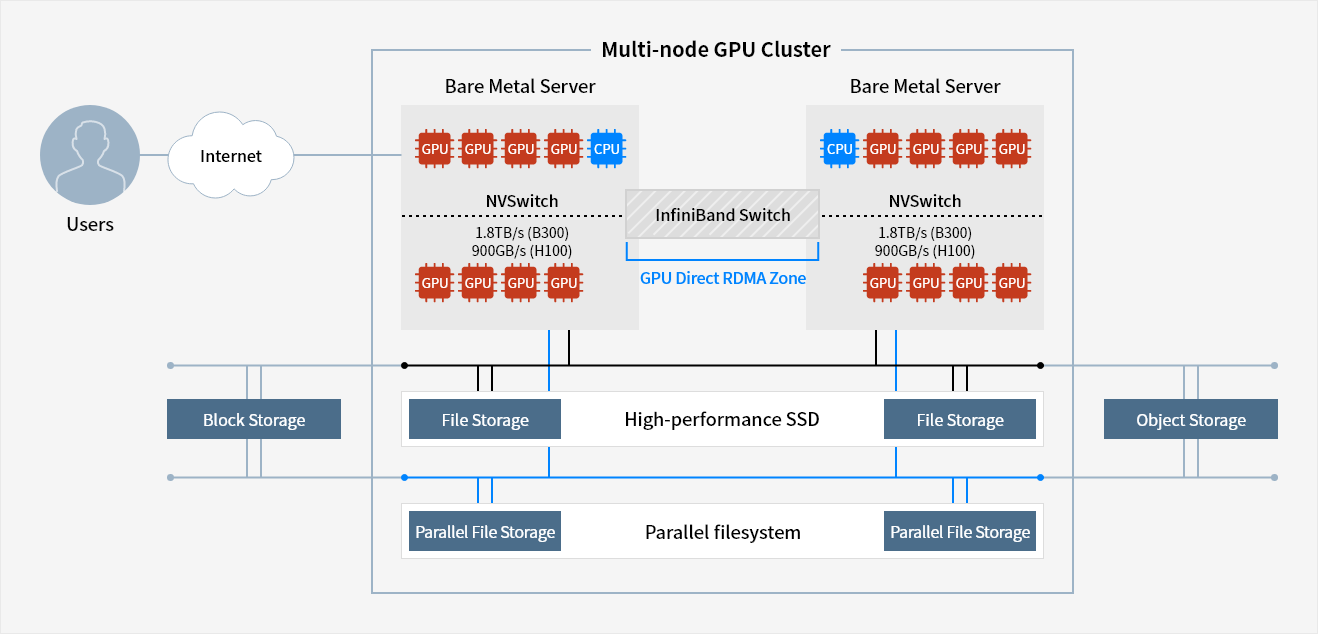

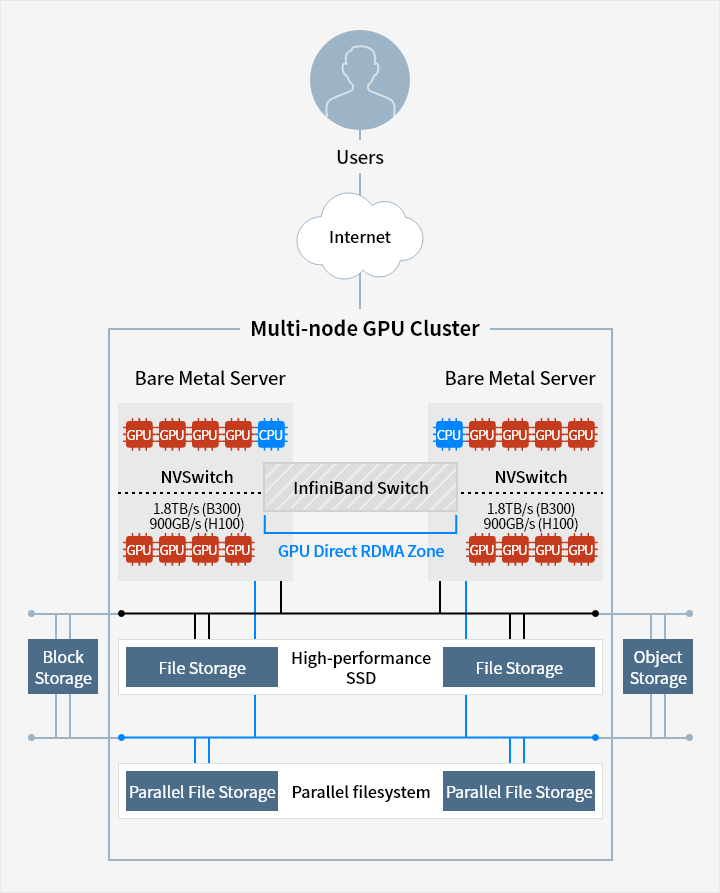

Multi-node GPU Cluster runs on Bare Metal Servers built on the high-performance NVIDIA SuperPOD architecture. Its GPUs can serve multiple user jobs or run high-performance distributed workloads for large-scale AI model training.

Integration with High-Performance Network

Through integration with the network resources of Samsung Cloud Platform, Multi-node GPU Cluster can handle high-performance AI jobs. GPUDirect RDMA (Remote Direct Memory Access), configured over InfiniBand switches, moves data directly between GPU memories, enabling high-speed AI and machine learning computation.
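As an illustration of how a distributed job might request this data path, the sketch below builds environment variables for NCCL, a communication library commonly used for multi-GPU training. It is an assumption that jobs on this service use NCCL; the standard OS image may already set these values, and `build_nccl_env` and the `mlx5` adapter prefix are hypothetical names chosen for the example.

```python
import os
from typing import Dict, Optional


def build_nccl_env(hca_prefix: Optional[str] = None, debug: bool = False) -> Dict[str, str]:
    """Return NCCL environment variables that request InfiniBand with GPUDirect RDMA."""
    env = {
        "NCCL_IB_DISABLE": "0",       # keep the InfiniBand transport enabled
        "NCCL_NET_GDR_LEVEL": "SYS",  # permit GPUDirect RDMA at any GPU-to-NIC topology distance
    }
    if hca_prefix:
        # Restrict NCCL to adapters whose name starts with this prefix.
        env["NCCL_IB_HCA"] = hca_prefix
    if debug:
        # Ask NCCL to log which transport it actually selected.
        env["NCCL_DEBUG"] = "INFO"
    return env


if __name__ == "__main__":
    # "mlx5" is a hypothetical adapter prefix; check the actual device names on the server.
    launch_env = {**os.environ, **build_nccl_env(hca_prefix="mlx5", debug=True)}
    for key in sorted(k for k in launch_env if k.startswith("NCCL_")):
        print(f"{key}={launch_env[key]}")
```

Passing the resulting dictionary as the environment of the training launcher keeps the settings in one place. With `NCCL_DEBUG=INFO`, the job log is one way to check whether GPUDirect RDMA was actually used.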

Integration with High-Performance Storage

Multi-node GPU Cluster supports integration with various storage resources on the Samsung Cloud Platform.

You can use high-performance SSD File Storage directly integrated with the high-speed network, or NVMe Parallel File Storage. Integration with Block Storage and Object Storage is also available.

Service Architecture

Block Storage - Multi-node GPU Cluster [File Storage - High-performance SSD - File System] - Object Storage (BM)

Block Storage - Multi-node GPU Cluster [Parallel File Storage - Parallel filesystem - Parallel File Storage] - Object Storage (BM)

[Diagram: Multi-node GPU Cluster. In each server, the GPUs are connected through NVSwitch: 1.8 TB/s (B300), 900 GB/s (H100).]
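To put the NVSwitch figures in the architecture diagram into perspective, the following sketch estimates the ideal time to move a payload at each quoted bandwidth. It assumes decimal units and treats the quoted numbers as peak rates, ignoring latency and protocol overhead, so real transfers will be slower. The function name and the 140 GB payload are hypothetical.

```python
# Nominal NVSwitch bandwidth figures quoted in the diagram, in bytes per second (decimal units).
NVSWITCH_BANDWIDTH_BYTES_PER_S = {
    "B300": 1.8e12,  # 1.8 TB/s
    "H100": 900e9,   # 900 GB/s
}


def ideal_transfer_seconds(num_bytes: float, gpu_model: str) -> float:
    """Lower bound on the time to move num_bytes at the quoted peak bandwidth."""
    return num_bytes / NVSWITCH_BANDWIDTH_BYTES_PER_S[gpu_model]


if __name__ == "__main__":
    # Hypothetical payload: 70 billion parameters stored in 2 bytes each.
    payload_bytes = 70e9 * 2
    for model in NVSWITCH_BANDWIDTH_BYTES_PER_S:
        seconds = ideal_transfer_seconds(payload_bytes, model)
        print(f"{model}: {seconds * 1000:.1f} ms (ideal)")
```

At these nominal rates the B300 figure is twice the H100 figure, so the same payload takes half the ideal time.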

Key Features

Create/manage GPU Bare Metal Server

- Standard GPU Bare Metal Server with 8 NVIDIA GPUs (B300, H100)

※ Includes internal NVMe disk, NVIDIA NVSwitch, and NVIDIA NVLink

- Provide a standard OS image with the RDMA SW stack (OS: Ubuntu)

High performance processing

- Configure a GPUDirect RDMA environment using InfiniBand switches

- Provide high-performance SSD File Storage

- Provide NVMe Parallel File Storage

Storage and network integration

- Provide additional storage (Block, Object, File) and network connections in addition to the OS disk

- Configure integration settings for subnet/IP and VPC Firewall
