In a recent interview with Bloomberg, Microsoft CEO Satya Nadella revealed his ambition to challenge Google’s dominance in the search engine market by adding ChatGPT to its Bing search engine. Meanwhile, Google CEO Sundar Pichai unveiled Bard, a conversational AI service to take on ChatGPT. In addition, it was reported that ChatGPT attracted 100 million users in just two months after its launch. Global tech companies have been aggressively developing and launching AI solutions. Confirming this intense competition, Forbes reported, “The new AI wars have begun.”

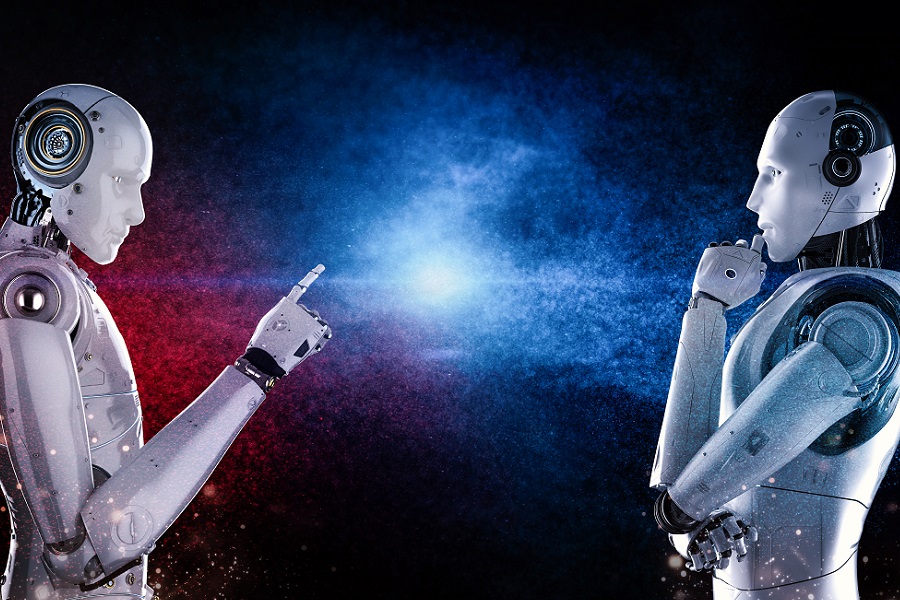

As AI is being developed at hair-raising speed, the “Turing Test” (a test of a machine's ability to exhibit intelligent behavior equivalent to that of a human) now seems to have become meaningless, because AI chatbots can provide answers at a higher level than humans can.

ChatGPT with Boundless Potential

Unlike existing chatbots, ChatGPT writes like a human because it has learned from an extensive amount of information on the Internet. Recently, ChatGPT proved competent enough to pass a medical licensing exam, law exams, and a business management course exam, which makes it appear to have professional capabilities. Academic circles are therefore concerned that many students will write or plagiarize theses using ChatGPT. Indeed, it was reported that some international school students in Korea were found to have written English essays using ChatGPT and received failing grades as a result.

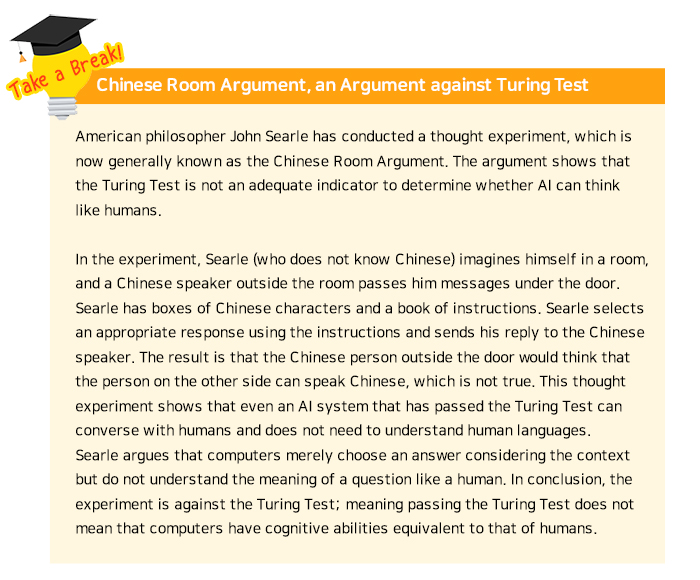

ChatGPT does not yet have the latest information because its knowledge is restricted to things it learned before 2021. To test its competence, we asked it a rather difficult question. When we asked ChatGPT for its opinion on people abusing it for plagiarism or medical purposes, ChatGPT gave the following answer:

What do you think? Can you believe it took less than one minute to generate this clear answer? If ChatGPT can perform at this high level, then I don’t need to write anything else in the near future. One media outlet reported that in two years, about 90% of all content would be produced by AI.

But there are concerns that generative AIs like ChatGPT might exponentially increase “garbage data” online. ChatGPT's writing ability is outstanding enough to deceive even experts, but it has a limit: it can only generate output from the limited data it has learned.

Big Tech AI War Triggered by ChatGPT … Competition Between Google’s Bard and Microsoft’s Bing

OpenAI’s ChatGPT is trained with a reward model built from human feedback on its answers, so it can keep improving as long as it is given a large amount of training data and proper feedback. This technique, Reinforcement Learning from Human Feedback (RLHF), uses human ratings to assess the model's output; reinforcement learning more broadly is used for robot control, game AI, autonomous cars, and so on. However, human feedback is essential, and collecting it comes at a high monetary cost. Output also varies significantly depending on how the reward function is designed. Therefore, ChatGPT may eventually obtain feedback from the learning results of other AIs, as AlphaGo did, to reduce time and costs.
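To make the idea of learning a reward from feedback more concrete, here is a minimal sketch in Python. It is not OpenAI's implementation. It assumes a toy setting in which human raters compare pairs of candidate answers, and it fits one score per answer with a simple pairwise-preference (Bradley-Terry) model. The function name `train_reward_model` and the `feedback` data are hypothetical and exist only for illustration.

```python
import math


def train_reward_model(preferences, n_items, lr=0.1, epochs=200):
    """Fit one scalar reward per candidate answer from pairwise feedback.

    preferences: list of (preferred_index, rejected_index) pairs.
    Assumes a Bradley-Terry model:
        P(a preferred over b) = sigmoid(reward[a] - reward[b])
    """
    rewards = [0.0] * n_items
    for _ in range(epochs):
        for winner, loser in preferences:
            # Probability the current rewards assign to the rater's choice.
            p = 1.0 / (1.0 + math.exp(-(rewards[winner] - rewards[loser])))
            # Gradient ascent on the log-likelihood of that choice.
            rewards[winner] += lr * (1.0 - p)
            rewards[loser] -= lr * (1.0 - p)
    return rewards


# Hypothetical feedback: raters compared three candidate answers (0, 1, 2).
feedback = [(0, 1), (0, 2), (1, 2), (0, 1), (1, 2)]
rewards = train_reward_model(feedback, n_items=3)

# The answer with the highest learned reward is the one raters favored most.
best = max(range(3), key=lambda i: rewards[i])
print(best, [round(r, 2) for r in rewards])
```

In a real RLHF pipeline, the scores would come from a neural network that reads the answer text, and the language model would then be tuned to produce answers that score highly. The sketch only shows why the quantity and quality of human comparisons matter so much: the learned rewards are only as good as the feedback behind them.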

As mentioned, Google unveiled its new chatbot, Bard, in Paris, France, on February 8th. Google has been an AI technology leader among big global tech companies. However, on launch day, when Bard was asked, "What discoveries from the James Webb Space Telescope can I tell my 9-year-old about?" it incorrectly stated that the James Webb Space Telescope took the very first pictures of a planet outside our solar system. This inaccuracy made Google’s share price plummet by almost 8%. But considering that Google changed the AI paradigm through AlphaGo, it will probably introduce a near-perfect version soon.

On February 7th, Microsoft launched a new version of its Bing search engine with built-in support for ChatGPT. Microsoft originally planned to unveil the service one month later but announced it earlier, perhaps because Google had said the previous day that it would reveal Bard, a similar conversational AI service, within the month. When a user asks a question, Bing answers as if conversing with the user. Following its previous one-billion-dollar investment in OpenAI, Microsoft will invest 10 billion dollars in March 2023. With ChatGPT built in, Bing will be able to answer questions through conversation and even provide consulting services. There are high expectations about how Google and Microsoft, two significant players in the search engine market, will change the landscape of the conversational AI market.

What Do You Think the AI Era Will Look Like in the Future?

The next generation will grow up as AI natives who can learn from, converse with, and spend their free time with AI. This also means obtaining knowledge will be much easier. A senior researcher at NIA said, “Humans have accumulated their own experiences directly or through the experiences of others delivered via the media. But AI services have changed the definition of knowledge by overhauling the human learning process.”

It seems AI can replace humans in simple tasks (e.g., information retrieval or customer response at a call center) and complicated ones (e.g., psychological therapy to treat PTSD) alike. ChatGPT can write the kind of program that would otherwise be created by a developer who earns more than 150 thousand dollars a year. Maybe I should be concerned about my job.

Who do you think will win the AI war? Regardless of the winner, we should carefully look at how AI will change the world. I hope the way forward for the business will be more human-centered.
