The previous article introduced deepfakes: images, videos, and other media synthesized by artificial intelligence (AI). But how was media tampered with before deepfake technology existed?

Before AI technology emerged, images and videos were forged with traditional methods. For example, video editing was used to create videos that intentionally distort the original content, and image editing programs were used to make composite images for inappropriate purposes. Going back further, people edited documents and images by hand, faking someone’s signature on a contract or drawing a mustache on a photo. Now that digital forgery and falsification methods have advanced significantly, people use specialized programs to edit content.

This kind of tampering is called a cheapfake: media altered by a person using conventional, affordable technologies, without much time or effort. It is also called a shallowfake, in contrast to a deepfake.

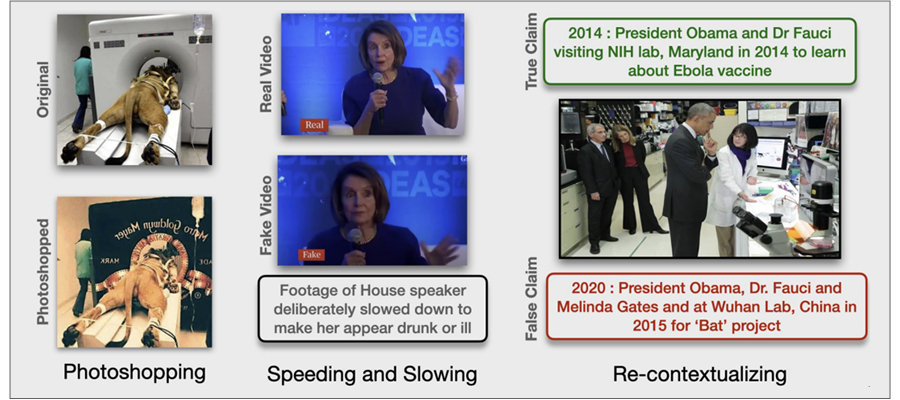

- Photoshopping: original → photoshopped

- Speeding and slowing: real video → fake video. Footage of the House Speaker deliberately slowed down to make her appear drunk or ill

- Re-contextualizing: true claim (2014): President Obama and Dr. Fauci visiting the NIH lab in Maryland in 2014 to learn about the Ebola vaccine → false claim (2020): President Obama, Dr. Fauci, and Melinda Gates at the Wuhan lab in China in 2015 for a bat project

(Left) Photoshopped image falsely portraying the filming of MGM’s iconic roaring lion bumper

(Middle) Interview footage of House Speaker Nancy Pelosi intentionally slowed down to make her appear drunk or ill

(Right) An image of former U.S. President Obama listening to an explanation of the Ebola vaccine at the NIH lab in Maryland (2014). The image was later circulated with the false claim that it shows Obama being briefed on a bat project at the Wuhan lab in China (2020)

Source: MMSys’21 Grand Challenge on Detecting Cheapfakes, https://arxiv.org/pdf/2107.05297.pdf

As the boundary between online and offline blurs and contact-free lifestyles become the new normal, more communication and commercial transactions are taking place online. Files of all kinds are transmitted over the internet, from important documents containing personal information and contracts to everyday photos shared with friends. As digital media has a growing influence on many aspects of our daily lives, there is an increasing need for technology that detects forgery, so that we can be sure the files we receive have not been tampered with.

Cheapfake detection technology is being actively researched for various purposes in different fields. The following is an actual project carried out by Team9.

Team9 Understands the Customer and Defines the Cheapfake Issue

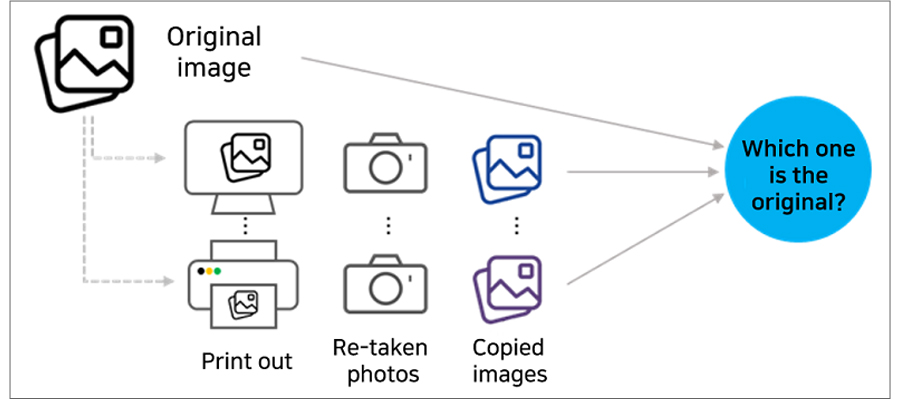

Cheapfake media come in many types and forms, so it is necessary to clearly define the target scope and format and to choose technologies suited to the purpose of detection. The cheapfake media files given to Team9 were photos of printed images (let’s call them “copied images” for convenience).

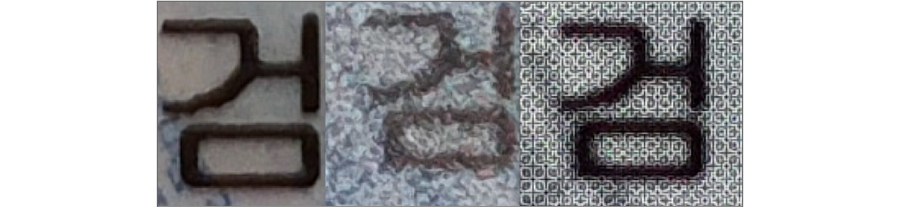

First, the team analyzed the images to be detected in order to define the issue. The original and copied images looked quite similar, but differences appeared when they were zoomed in. Figure 3 below compares enlarged views of the original and copied images. Can you see the differences between them?

Each image shows a certain pattern. To identify these patterns more accurately, the team used AI to extract the patterns and features.

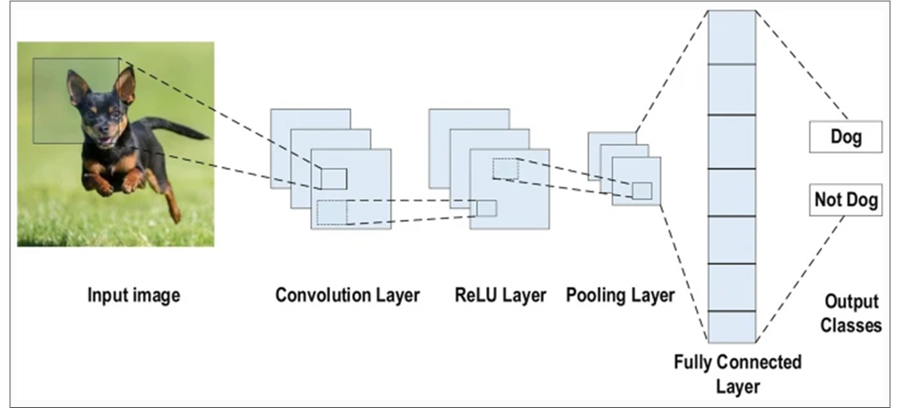

Team9 defined the issue as an image classification problem and decided to solve it with a convolutional neural network (CNN). A CNN is a type of artificial neural network (ANN) and the one most commonly used to analyze visual imagery, for example to find a specific object in images or videos or to extract patterns. It is widely used in computer vision applications.

(Source: Laith Alzubaidi, Review of deep learning: concepts, CNN architectures, challenges, applications, future directions, https://journalofbigdata.springeropen.com/articles/10.1186/s40537-021-00444-8)
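To make the idea of pattern extraction a little more concrete, here is a minimal sketch of the convolution operation at the heart of a CNN, written in plain Python. It is not Team9's model: the `HIGH_PASS` kernel is hand-written for illustration (a real CNN learns its kernels during training), and the `smooth` and `dotted` images are tiny made-up stand-ins for a clean image and one carrying a fine repeating pattern.

```python
def conv2d(image, kernel):
    """Valid (no padding) 2D cross-correlation, the core operation of a CNN layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            acc = 0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        out.append(row)
    return out


def relu(feature_map):
    """Keep positive responses and zero out the rest."""
    return [[max(0, v) for v in row] for row in feature_map]


# Assumption: a hand-written high-pass kernel standing in for a learned filter.
HIGH_PASS = [[0, -1, 0],
             [-1, 4, -1],
             [0, -1, 0]]


def texture_energy(image):
    """Sum of the rectified filter responses; larger means more fine texture."""
    feature_map = relu(conv2d(image, HIGH_PASS))
    return sum(sum(row) for row in feature_map)


# Made-up 5x5 grayscale images: one flat, one with a fine dot pattern.
smooth = [[5] * 5 for _ in range(5)]
dotted = [[9 if (r + c) % 2 == 0 else 1 for c in range(5)] for r in range(5)]

print(texture_energy(smooth))  # 0
print(texture_energy(dotted))  # 160
```

The flat image produces no response, while the dotted one responds strongly. A trained CNN stacks many such filters and learns which responses separate one class of image from another.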

Efforts To Solve Perceived Problems: Hypothesis Presentation, Failure, And Repeated Correction

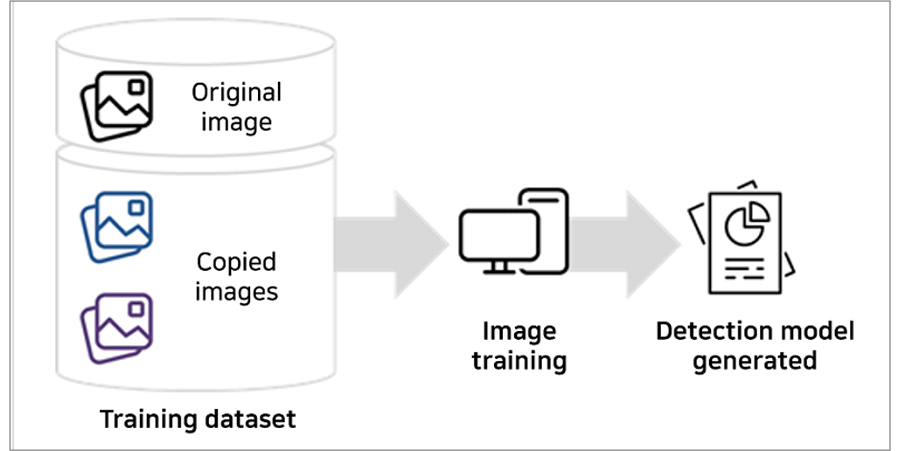

Team9 built a training dataset by taking thousands of photos of original and copied images, then used it to produce a CNN-based detection model.

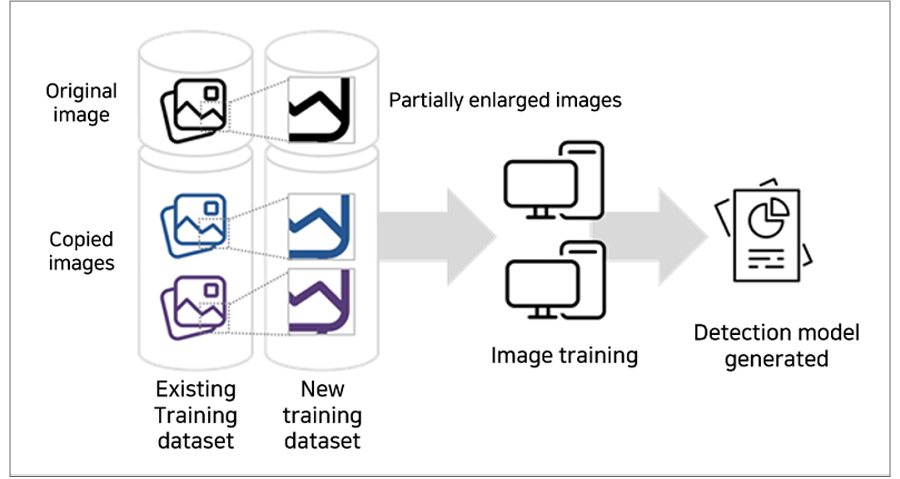
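As a rough illustration of how such a dataset might be organized, the sketch below labels each photo as original (0) or copied (1) and holds out a validation set with the same class balance. The function names, file names, label encoding, and the 20% split are all assumptions made for this example; the article does not describe how Team9 actually prepared its data.

```python
import random


def build_labeled_dataset(original_paths, copied_paths):
    """Pair each file path with a class label: 0 = original, 1 = copied."""
    return [(p, 0) for p in original_paths] + [(p, 1) for p in copied_paths]


def stratified_split(samples, val_fraction=0.2, seed=0):
    """Split into train/validation sets, keeping the class ratio in both."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train, val = [], []
    for label in sorted({lab for _, lab in samples}):
        group = [s for s in samples if s[1] == label]
        rng.shuffle(group)
        n_val = int(len(group) * val_fraction)
        val.extend(group[:n_val])
        train.extend(group[n_val:])
    return train, val


# Hypothetical file names, for illustration only.
originals = [f"orig_{i}.jpg" for i in range(10)]
copies = [f"copy_{i}.jpg" for i in range(10)]

samples = build_labeled_dataset(originals, copies)
train, val = stratified_split(samples, val_fraction=0.2, seed=0)
print(len(train), len(val))  # 16 4
```

Splitting per class keeps both sets balanced, so a validation score is not skewed by one class dominating the held-out photos.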

However, the initial models created through this process were not very accurate. The algorithm learned from whole images and failed to learn the minor features seen in Figure 3. In other words, when a model learns from images, it must be guided to pay attention to even small features of the subject.
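One plausible reason a whole-image approach can miss fine features is that large photos are usually shrunk before being fed to a network. The sketch below illustrates that effect under this assumption (the article does not say this was the cause in Team9's case): after 2x2 average pooling, a made-up dotted image becomes indistinguishable from a flat one.

```python
def average_pool(image, size):
    """Downscale by averaging non-overlapping size x size blocks."""
    out = []
    for r in range(0, len(image) - size + 1, size):
        row = []
        for c in range(0, len(image[0]) - size + 1, size):
            block = [image[r + i][c + j] for i in range(size) for j in range(size)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


# Made-up 4x4 grayscale images: one flat, one with a fine dot pattern.
flat = [[5] * 4 for _ in range(4)]
dotted = [[9 if (r + c) % 2 == 0 else 1 for c in range(4)] for r in range(4)]

print(flat == dotted)                                    # False
print(average_pool(flat, 2) == average_pool(dotted, 2))  # True
```

At full resolution the two images differ, but once averaged down the pattern is gone, so a model trained only on the shrunken versions has nothing left to learn from.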

To solve this problem, the project team added training data by enlarging parts of the existing training images so that even the smallest features were captured.
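The enlargement step can be sketched as cropping small regions from each training image and scaling them up. The following is only an illustration under assumed choices (non-overlapping square patches, nearest-neighbour upscaling, and the hypothetical helper names `crop`, `enlarge`, and `enlarged_patches`); the article does not specify how Team9 selected or resized the regions.

```python
def crop(image, top, left, size):
    """Cut a size x size patch whose top-left corner is at (top, left)."""
    return [row[left:left + size] for row in image[top:top + size]]


def enlarge(image, factor):
    """Nearest-neighbour upscaling: repeat every pixel `factor` times per axis."""
    out = []
    for row in image:
        wide = [v for v in row for _ in range(factor)]
        out.extend([list(wide) for _ in range(factor)])
    return out


def enlarged_patches(image, patch_size, factor):
    """Tile the image into non-overlapping patches and enlarge each one."""
    patches = []
    for top in range(0, len(image) - patch_size + 1, patch_size):
        for left in range(0, len(image[0]) - patch_size + 1, patch_size):
            patches.append(enlarge(crop(image, top, left, patch_size), factor))
    return patches


# Made-up 4x4 image whose pixel values are 0..15, for illustration.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
patches = enlarged_patches(image, patch_size=2, factor=2)

print(len(patches))  # 4
print(patches[0])    # [[0, 0, 1, 1], [0, 0, 1, 1], [4, 4, 5, 5], [4, 4, 5, 5]]
```

Each enlarged patch inherits the label of the image it came from, so the model sees the fine detail at a scale it can learn from.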

The team also changed the model structure so that it could be trained on the added data. Through this trial and error, the team raised the accuracy to a higher-than-average level, thus resolving the problem.

The above is one way to approach the detection of cheapfake media. Cheapfake media is generated in various forms across many sectors, so various approaches and technologies for detecting cheapfakes are being developed as well. Team9 is also working on developing and improving technologies to respond to digital tampering.

In the next installment, we will learn more about blocking tampered images in advance.

※ This article was written based on objective research outcomes and facts, which were available on the date of writing this article, but the article may not represent the views of the company.
